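This is a hand-typed copy of the code from pp. 229-233 of 「ゼロから作るDeep Learning ① (Pythonで学ぶディープラーニングの理論と実装)」.

I feel my understanding has deepened up to this point, but there is so much material that I suspect there are still parts I have not fully understood.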

```python
# coding: utf-8
import sys, os
import numpy as np
import matplotlib.pyplot as plt
import urllib.request
import gzip
import pickle
from collections import OrderedDict


def main():
    # Load the data (unlike the earlier examples, flatten=False)
    mymnist = MyMnist()
    (x_train, t_train, x_test, t_test) = mymnist.load_mnist(flatten=False)

    # Reduce the data if training takes too long
    x_train, t_train = x_train[:5000], t_train[:5000]
    x_test, t_test = x_test[:1000], t_test[:1000]

    max_epochs = 20

    # Training
    network = SimpleConvNet(input_dim=(1, 28, 28),
                            conv_param={'filter_num': 30,
                                        'filter_size': 5,
                                        'pad': 0,
                                        'stride': 1},
                            hidden_size=100,
                            output_size=10,
                            weight_init_std=0.01)
    trainer = Trainer(network, x_train, t_train, x_test, t_test,
                      epochs=max_epochs,
                      mini_batch_size=100,
                      optimizer='Adam',
                      optimizer_param={'lr': 0.001},
                      evaluate_sample_num_per_epoch=1000)
    trainer.train()

    # Save the parameters
    network.save_params("params.pkl")
    print("Saved Network Parameters!")

    # Plot the accuracy curves
    markers = {'train': 'o', 'test': 's'}
    x = np.arange(max_epochs)
    plt.plot(x, trainer.train_acc_list, marker='o', label='train', markevery=2)
    plt.plot(x, trainer.test_acc_list, marker='s', label='test', markevery=2)
    plt.xlabel("epochs")
    plt.ylabel("accuracy")
    plt.ylim(0, 1.0)
    plt.legend(loc='lower right')
    plt.show()


class MyMnist:
    def __init__(self):
        pass

    def load_mnist(self, flatten=True):
        data_files = self.download_mnist()

        # Convert to NumPy arrays
        dataset = {}
        dataset['train_img'] = self.load_img(data_files['train_img'])
        dataset['train_label'] = self.load_label(data_files['train_label'])
        dataset['test_img'] = self.load_img(data_files['test_img'])
        dataset['test_label'] = self.load_label(data_files['test_label'])

        # Normalize pixel values to the range 0.0-1.0
        for key in ('train_img', 'test_img'):
            dataset[key] = dataset[key].astype(np.float32)
            dataset[key] /= 255.0

        for key in ('train_label', 'test_label'):
            dataset[key] = self.change_one_hot_label(dataset[key])

        # When the images should not be flattened into 1-D arrays
        if not flatten:
            for key in ('train_img', 'test_img'):
                dataset[key] = dataset[key].reshape(-1, 1, 28, 28)

        return (dataset['train_img'],
                dataset['train_label'],
                dataset['test_img'],
                dataset['test_label'])

    def change_one_hot_label(self, X):
        T = np.zeros((X.size, 10))
        for idx, row in enumerate(T):
            row[X[idx]] = 1
        return T

    def download_mnist(self):
        url_base = 'http://yann.lecun.com/exdb/mnist/'
        key_file = {'train_img':   'train-images-idx3-ubyte.gz',
                    'train_label': 'train-labels-idx1-ubyte.gz',
                    'test_img':    't10k-images-idx3-ubyte.gz',
                    'test_label':  't10k-labels-idx1-ubyte.gz'}
        data_files = {}
        dataset_dir = os.path.dirname(os.path.abspath(__file__))

        for data_name, file_name in key_file.items():
            req_url = url_base + file_name
            file_path = dataset_dir + "/" + file_name

            request = urllib.request.Request(req_url)
            response = urllib.request.urlopen(request).read()
            with open(file_path, mode='wb') as f:
                f.write(response)

            data_files[data_name] = file_path
        return data_files

    def load_img(self, file_path):
        img_size = 784  # = 28*28
        with gzip.open(file_path, 'rb') as f:
            data = np.frombuffer(f.read(), np.uint8, offset=16)
        data = data.reshape(-1, img_size)
        return data

    def load_label(self, file_path):
        with gzip.open(file_path, 'rb') as f:
            labels = np.frombuffer(f.read(), np.uint8, offset=8)
        return labels


# conv - relu - pool - affine - relu - affine - softmax
class SimpleConvNet:
    def __init__(self,
                 input_dim=(1, 28, 28),       # channels, height, width
                 conv_param={'filter_num': 30,
                             'filter_size': 5,
                             'pad': 0,        # padding of the conv layer
                             'stride': 1},    # stride of the conv layer
                 hidden_size=100,             # number of neurons in the hidden layer
                 output_size=10,              # number of neurons in the output layer
                 weight_init_std=0.01):       # std. dev. of the initial weights (*)
        # (*) For 'relu' or 'he', use the He initialization;
        #     for 'sigmoid' or 'xavier', use the Xavier initialization.
        filter_num = conv_param['filter_num']
        filter_size = conv_param['filter_size']
        filter_pad = conv_param['pad']
        filter_stride = conv_param['stride']
        input_size = input_dim[1]
        conv_output_size = \
            (input_size - filter_size + 2*filter_pad) / filter_stride + 1
        pool_output_size = \
            int(filter_num * (conv_output_size/2) * (conv_output_size/2))

        # Initialize the weights
        self.params = {}
        self.params['W1'] = weight_init_std * \
            np.random.randn(filter_num, input_dim[0], filter_size, filter_size)
        self.params['b1'] = np.zeros(filter_num)
        self.params['W2'] = weight_init_std * \
            np.random.randn(pool_output_size, hidden_size)
        self.params['b2'] = np.zeros(hidden_size)
        self.params['W3'] = weight_init_std * \
            np.random.randn(hidden_size, output_size)
        self.params['b3'] = np.zeros(output_size)

        # Create the layers
        self.layers = OrderedDict()
        self.layers['Conv1'] = Convolution(self.params['W1'],
                                           self.params['b1'],
                                           conv_param['stride'],
                                           conv_param['pad'])
        self.layers['Relu1'] = Relu()
        self.layers['Pool1'] = Pooling(pool_h=2, pool_w=2, stride=2)
        self.layers['Affine1'] = Affine(self.params['W2'], self.params['b2'])
        self.layers['Relu2'] = Relu()
        self.layers['Affine2'] = Affine(self.params['W3'], self.params['b3'])

        self.last_layer = SoftmaxWithLoss()

    def predict(self, x):
        for layer in self.layers.values():
            x = layer.forward(x)
        return x

    def loss(self, x, t):
        """Compute the loss.
        x is the input data and t is the teacher label.
        """
        y = self.predict(x)
        return self.last_layer.forward(y, t)

    def accuracy(self, x, t, batch_size=100):
        if t.ndim != 1:
            t = np.argmax(t, axis=1)

        acc = 0.0
        for i in range(int(x.shape[0] / batch_size)):
            tx = x[i*batch_size:(i+1)*batch_size]
            tt = t[i*batch_size:(i+1)*batch_size]
            y = self.predict(tx)
            y = np.argmax(y, axis=1)
            acc += np.sum(y == tt)

        return acc / x.shape[0]

    # Gradient by numerical differentiation. x: input data, t: teacher labels
    def numerical_gradient(self, x, t):
        loss_w = lambda w: self.loss(x, t)

        grads = {}
        for idx in (1, 2, 3):
            grads['W' + str(idx)] = \
                self._numerical_gradient(loss_w, self.params['W' + str(idx)])
            grads['b' + str(idx)] = \
                self._numerical_gradient(loss_w, self.params['b' + str(idx)])
        return grads

    def _numerical_gradient(self, f, x):
        h = 1e-4  # 0.0001
        grad = np.zeros_like(x)

        it = np.nditer(x, flags=['multi_index'], op_flags=['readwrite'])
        while not it.finished:
            idx = it.multi_index
            tmp_val = x[idx]
            x[idx] = tmp_val + h
            fxh1 = f(x)  # f(x+h)

            x[idx] = tmp_val - h
            fxh2 = f(x)  # f(x-h)
            grad[idx] = (fxh1 - fxh2) / (2*h)

            x[idx] = tmp_val  # restore the original value
            it.iternext()
        return grad

    # Gradient by backpropagation. x: input data, t: teacher labels
    def gradient(self, x, t):
        # forward
        self.loss(x, t)

        # backward
        dout = 1
        dout = self.last_layer.backward(dout)

        layers = list(self.layers.values())
        layers.reverse()
        for layer in layers:
            dout = layer.backward(dout)

        # Collect the gradients
        grads = {}
        grads['W1'] = self.layers['Conv1'].dW
        grads['b1'] = self.layers['Conv1'].db
        grads['W2'] = self.layers['Affine1'].dW
        grads['b2'] = self.layers['Affine1'].db
        grads['W3'] = self.layers['Affine2'].dW
        grads['b3'] = self.layers['Affine2'].db
        return grads

    def save_params(self, file_name="params.pkl"):
        params = {}
        for key, val in self.params.items():
            params[key] = val
        with open(file_name, 'wb') as f:
            pickle.dump(params, f)

    def load_params(self, file_name="params.pkl"):
        with open(file_name, 'rb') as f:
            params = pickle.load(f)
        for key, val in params.items():
            self.params[key] = val

        for i, key in enumerate(['Conv1', 'Affine1', 'Affine2']):
            self.layers[key].W = self.params['W' + str(i+1)]
            self.layers[key].b = self.params['b' + str(i+1)]


class Convolution:
    def __init__(self, W, b, stride=1, pad=0):
        self.W = W
        self.b = b
        self.stride = stride
        self.pad = pad

        # Intermediate data (used in backward)
        self.x = None
        self.col = None
        self.col_W = None

        # Gradients of the weight and bias parameters
        self.dW = None
        self.db = None

    def forward(self, x):
        FN, C, FH, FW = self.W.shape
        N, C, H, W = x.shape
        out_h = 1 + int((H + 2*self.pad - FH) / self.stride)
        out_w = 1 + int((W + 2*self.pad - FW) / self.stride)

        col = im2col(x, FH, FW, self.stride, self.pad)
        col_W = self.W.reshape(FN, -1).T

        out = np.dot(col, col_W) + self.b
        out = out.reshape(N, out_h, out_w, -1).transpose(0, 3, 1, 2)

        self.x = x
        self.col = col
        self.col_W = col_W
        return out

    def backward(self, dout):
        FN, C, FH, FW = self.W.shape
        dout = dout.transpose(0, 2, 3, 1).reshape(-1, FN)

        self.db = np.sum(dout, axis=0)
        self.dW = np.dot(self.col.T, dout)
        self.dW = self.dW.transpose(1, 0).reshape(FN, C, FH, FW)

        dcol = np.dot(dout, self.col_W.T)
        dx = col2im(dcol, self.x.shape, FH, FW, self.stride, self.pad)
        return dx


class Pooling:
    def __init__(self, pool_h, pool_w, stride=2, pad=0):
        self.pool_h = pool_h
        self.pool_w = pool_w
        self.stride = stride
        self.pad = pad

        self.x = None
        self.arg_max = None

    def forward(self, x):
        N, C, H, W = x.shape
        out_h = int(1 + (H - self.pool_h) / self.stride)
        out_w = int(1 + (W - self.pool_w) / self.stride)

        col = im2col(x, self.pool_h, self.pool_w, self.stride, self.pad)
        col = col.reshape(-1, self.pool_h*self.pool_w)

        arg_max = np.argmax(col, axis=1)
        out = np.max(col, axis=1)
        out = out.reshape(N, out_h, out_w, C).transpose(0, 3, 1, 2)

        self.x = x
        self.arg_max = arg_max
        return out

    def backward(self, dout):
        dout = dout.transpose(0, 2, 3, 1)

        pool_size = self.pool_h * self.pool_w
        dmax = np.zeros((dout.size, pool_size))
        dmax[np.arange(self.arg_max.size), self.arg_max.flatten()] = \
            dout.flatten()
        dmax = dmax.reshape(dout.shape + (pool_size,))

        dcol = dmax.reshape(dmax.shape[0] * dmax.shape[1] * dmax.shape[2], -1)
        dx = col2im(dcol, self.x.shape,
                    self.pool_h, self.pool_w, self.stride, self.pad)
        return dx


# input_data: (number of samples, channels, height, width)
def im2col(input_data, filter_h, filter_w, stride=1, pad=0):
    N, C, H, W = input_data.shape
    # // is floor (integer) division
    out_h = (H + 2*pad - filter_h)//stride + 1
    out_w = (W + 2*pad - filter_w)//stride + 1

    img = np.pad(input_data,
                 [(0, 0), (0, 0), (pad, pad), (pad, pad)],
                 'constant')
    col = np.zeros((N, C, filter_h, filter_w, out_h, out_w))

    for y in range(filter_h):
        y_max = y + stride*out_h
        for x in range(filter_w):
            x_max = x + stride*out_w
            col[:, :, y, x, :, :] = img[:, :, y:y_max:stride, x:x_max:stride]

    col = col.transpose(0, 4, 5, 1, 2, 3).reshape(N*out_h*out_w, -1)
    return col


# input_shape: shape of the input data, e.g. (10, 1, 28, 28)
def col2im(col, input_shape, filter_h, filter_w, stride=1, pad=0):
    N, C, H, W = input_shape
    out_h = (H + 2*pad - filter_h)//stride + 1
    out_w = (W + 2*pad - filter_w)//stride + 1
    col = col.reshape(N, out_h, out_w, C, filter_h, filter_w) \
             .transpose(0, 3, 4, 5, 1, 2)

    img = np.zeros((N, C, H + 2*pad + stride - 1, W + 2*pad + stride - 1))
    for y in range(filter_h):
        y_max = y + stride*out_h
        for x in range(filter_w):
            x_max = x + stride*out_w
            img[:, :, y:y_max:stride, x:x_max:stride] += col[:, :, y, x, :, :]

    return img[:, :, pad:H + pad, pad:W + pad]


class Trainer:
    def __init__(self, network, x_train, t_train, x_test, t_test,
                 epochs=20, mini_batch_size=100,
                 optimizer='SGD', optimizer_param={'lr': 0.01},
                 evaluate_sample_num_per_epoch=None, verbose=True):
        self.network = network
        self.verbose = verbose
        self.x_train = x_train
        self.t_train = t_train
        self.x_test = x_test
        self.t_test = t_test
        self.epochs = epochs
        self.batch_size = mini_batch_size
        self.evaluate_sample_num_per_epoch = evaluate_sample_num_per_epoch

        # optimizer
        optimizer_class_dict = {'sgd': SGD, 'momentum': Momentum,
                                'nesterov': Nesterov, 'adagrad': AdaGrad,
                                'rmsprop': RMSprop, 'adam': Adam}
        self.optimizer = optimizer_class_dict[optimizer.lower()](**optimizer_param)

        self.train_size = x_train.shape[0]
        self.iter_per_epoch = max(self.train_size / mini_batch_size, 1)
        self.max_iter = int(epochs * self.iter_per_epoch)
        self.current_iter = 0
        self.current_epoch = 0

        self.train_loss_list = []
        self.train_acc_list = []
        self.test_acc_list = []

    def train_step(self):
        batch_mask = np.random.choice(self.train_size, self.batch_size)
        x_batch = self.x_train[batch_mask]
        t_batch = self.t_train[batch_mask]

        grads = self.network.gradient(x_batch, t_batch)
        self.optimizer.update(self.network.params, grads)

        loss = self.network.loss(x_batch, t_batch)
        self.train_loss_list.append(loss)
        #if self.verbose: print("train loss:" + str(loss))

        if self.current_iter % self.iter_per_epoch == 0:
            self.current_epoch += 1

            x_train_sample, t_train_sample = self.x_train, self.t_train
            x_test_sample, t_test_sample = self.x_test, self.t_test
            if not self.evaluate_sample_num_per_epoch is None:
                t = self.evaluate_sample_num_per_epoch
                x_train_sample, t_train_sample = self.x_train[:t], self.t_train[:t]
                x_test_sample, t_test_sample = self.x_test[:t], self.t_test[:t]

            train_acc = self.network.accuracy(x_train_sample, t_train_sample)
            test_acc = self.network.accuracy(x_test_sample, t_test_sample)
            self.train_acc_list.append(train_acc)
            self.test_acc_list.append(test_acc)

            if self.verbose:
                print("epoch:", str(self.current_epoch),
                      "train acc:", str(train_acc),
                      "test acc:", str(test_acc))
        self.current_iter += 1

    def train(self):
        for i in range(self.max_iter):
            self.train_step()

        test_acc = self.network.accuracy(self.x_test, self.t_test)

        if self.verbose:
            print("=============== Final Test Accuracy")
            print("test acc:" + str(test_acc))


# Stochastic Gradient Descent
class SGD:
    def __init__(self, lr=0.01):
        self.lr = lr

    def update(self, params, grads):
        for key in params.keys():
            params[key] -= self.lr * grads[key]


class Momentum:
    def __init__(self, lr=0.01, momentum=0.9):
        self.lr = lr
        self.momentum = momentum
        self.v = None

    def update(self, params, grads):
        if self.v is None:
            self.v = {}
            for key, val in params.items():
                self.v[key] = np.zeros_like(val)

        for key in params.keys():
            self.v[key] = self.momentum*self.v[key] - self.lr*grads[key]
            params[key] += self.v[key]


# http://arxiv.org/abs/1212.0901
class Nesterov:
    def __init__(self, lr=0.01, momentum=0.9):
        self.lr = lr
        self.momentum = momentum
        self.v = None

    def update(self, params, grads):
        if self.v is None:
            self.v = {}
            for key, val in params.items():
                self.v[key] = np.zeros_like(val)

        for key in params.keys():
            params[key] += self.momentum * self.momentum * self.v[key]
            params[key] -= (1 + self.momentum) * self.lr * grads[key]
            self.v[key] *= self.momentum
            self.v[key] -= self.lr * grads[key]


class AdaGrad:
    def __init__(self, lr=0.01):
        self.lr = lr
        self.h = None

    def update(self, params, grads):
        if self.h is None:
            self.h = {}
            for key, val in params.items():
                self.h[key] = np.zeros_like(val)

        for key in params.keys():
            self.h[key] += grads[key] * grads[key]
            params[key] -= self.lr * grads[key] / (np.sqrt(self.h[key]) + 1e-7)


class RMSprop:
    def __init__(self, lr=0.01, decay_rate=0.99):
        self.lr = lr
        self.decay_rate = decay_rate
        self.h = None

    def update(self, params, grads):
        if self.h is None:
            self.h = {}
            for key, val in params.items():
                self.h[key] = np.zeros_like(val)

        for key in params.keys():
            self.h[key] *= self.decay_rate
            self.h[key] += (1 - self.decay_rate) * grads[key] * grads[key]
            params[key] -= self.lr * grads[key] / (np.sqrt(self.h[key]) + 1e-7)


# http://arxiv.org/abs/1412.6980v8
class Adam:
    def __init__(self, lr=0.001, beta1=0.9, beta2=0.999):
        self.lr = lr
        self.beta1 = beta1
        self.beta2 = beta2
        self.iter = 0
        self.m = None
        self.v = None

    def update(self, params, grads):
        if self.m is None:
            self.m, self.v = {}, {}
            for key, val in params.items():
                self.m[key] = np.zeros_like(val)
                self.v[key] = np.zeros_like(val)

        self.iter += 1
        lr_t = self.lr * np.sqrt(1.0 - self.beta2**self.iter) / \
            (1.0 - self.beta1**self.iter)

        for key in params.keys():
            #self.m[key] = self.beta1*self.m[key] + (1-self.beta1)*grads[key]
            #self.v[key] = self.beta2*self.v[key] + (1-self.beta2)*(grads[key]**2)
            self.m[key] += (1 - self.beta1) * (grads[key] - self.m[key])
            self.v[key] += (1 - self.beta2) * (grads[key]**2 - self.v[key])

            params[key] -= lr_t * self.m[key] / (np.sqrt(self.v[key]) + 1e-7)

            # correct bias
            #unbias_m += (1-self.beta1) * (grads[key] - self.m[key])
            # correct bias
            #unbisa_b += (1-self.beta2) * (grads[key]*grads[key] - self.v[key])
            #params[key] += self.lr * unbias_m / (np.sqrt(unbisa_b) + 1e-7)


class Relu:
    def __init__(self):
        self.mask = None

    def forward(self, x):
        self.mask = (x <= 0)
        out = x.copy()
        out[self.mask] = 0
        return out

    def backward(self, dout):
        dout[self.mask] = 0
        dx = dout
        return dx


# Helper for the Sigmoid layer below. It was missing from the listing,
# so the standard logistic function is assumed here.
def sigmoid(x):
    return 1 / (1 + np.exp(-x))


class Sigmoid:
    def __init__(self):
        self.out = None

    def forward(self, x):
        out = sigmoid(x)
        self.out = out
        return out

    def backward(self, dout):
        dx = dout * (1.0 - self.out) * self.out
        return dx


class Affine:
    def __init__(self, W, b):
        self.W = W
        self.b = b

        self.x = None
        self.original_x_shape = None
        # Derivatives of the weight and bias parameters
        self.dW = None
        self.db = None

    def forward(self, x):
        # Support tensor input
        self.original_x_shape = x.shape
        x = x.reshape(x.shape[0], -1)
        self.x = x

        out = np.dot(self.x, self.W) + self.b
        return out

    def backward(self, dout):
        dx = np.dot(dout, self.W.T)
        self.dW = np.dot(self.x.T, dout)
        self.db = np.sum(dout, axis=0)

        dx = dx.reshape(*self.original_x_shape)
        return dx


class SoftmaxWithLoss:
    def __init__(self):
        self.loss = None
        self.y = None  # output of softmax
        self.t = None  # teacher data

    def forward(self, x, t):
        self.t = t
        self.y = self.softmax(x)
        self.loss = self.cross_entropy_error(self.y, self.t)
        return self.loss

    def softmax(self, x):
        x = x - np.max(x, axis=-1, keepdims=True)  # guard against overflow
        return np.exp(x) / np.sum(np.exp(x), axis=-1, keepdims=True)

    def cross_entropy_error(self, y, t):
        if y.ndim == 1:
            t = t.reshape(1, t.size)
            y = y.reshape(1, y.size)

        # If the teacher data is one-hot, convert it to the index of the correct label
        if t.size == y.size:
            t = t.argmax(axis=1)

        batch_size = y.shape[0]
        return -np.sum(np.log(y[np.arange(batch_size), t] + 1e-7)) / batch_size

    def backward(self, dout=1):
        batch_size = self.t.shape[0]
        if self.t.size == self.y.size:  # teacher data is one-hot
            dx = (self.y - self.t) / batch_size
        else:
            dx = self.y.copy()
            dx[np.arange(batch_size), self.t] -= 1
            dx = dx / batch_size
        return dx


# http://arxiv.org/abs/1207.0580
class Dropout:
    def __init__(self, dropout_ratio=0.5):
        self.dropout_ratio = dropout_ratio
        self.mask = None

    def forward(self, x, train_flg=True):
        if train_flg:
            self.mask = np.random.rand(*x.shape) > self.dropout_ratio
            return x * self.mask
        else:
            return x * (1.0 - self.dropout_ratio)

    def backward(self, dout):
        return dout * self.mask


# http://arxiv.org/abs/1502.03167
class BatchNormalization:
    def __init__(self, gamma, beta, momentum=0.9,
                 running_mean=None, running_var=None):
        self.gamma = gamma
        self.beta = beta
        self.momentum = momentum
        self.input_shape = None  # 4-D for conv layers, 2-D for fully connected layers

        # Mean and variance used at test time
        self.running_mean = running_mean
        self.running_var = running_var

        # Intermediate data used in backward
        self.batch_size = None
        self.xc = None
        self.std = None
        self.dgamma = None
        self.dbeta = None

    def forward(self, x, train_flg=True):
        self.input_shape = x.shape
        if x.ndim != 2:
            N, C, H, W = x.shape
            x = x.reshape(N, -1)

        out = self.__forward(x, train_flg)
        return out.reshape(*self.input_shape)

    def __forward(self, x, train_flg):
        if self.running_mean is None:
            N, D = x.shape
            self.running_mean = np.zeros(D)
            self.running_var = np.zeros(D)

        if train_flg:
            mu = x.mean(axis=0)
            xc = x - mu
            var = np.mean(xc**2, axis=0)
            std = np.sqrt(var + 10e-7)
            xn = xc / std

            self.batch_size = x.shape[0]
            self.xc = xc
            self.xn = xn
            self.std = std
            self.running_mean = \
                self.momentum * self.running_mean + (1-self.momentum) * mu
            self.running_var = \
                self.momentum * self.running_var + (1-self.momentum) * var
        else:
            xc = x - self.running_mean
            xn = xc / ((np.sqrt(self.running_var + 10e-7)))

        out = self.gamma * xn + self.beta
        return out

    def backward(self, dout):
        if dout.ndim != 2:
            N, C, H, W = dout.shape
            dout = dout.reshape(N, -1)

        dx = self.__backward(dout)
        dx = dx.reshape(*self.input_shape)
        return dx

    def __backward(self, dout):
        dbeta = dout.sum(axis=0)
        dgamma = np.sum(self.xn * dout, axis=0)
        dxn = self.gamma * dout
        dxc = dxn / self.std
        dstd = -np.sum((dxn * self.xc) / (self.std * self.std), axis=0)
        dvar = 0.5 * dstd / self.std
        dxc += (2.0 / self.batch_size) * self.xc * dvar
        dmu = np.sum(dxc, axis=0)
        dx = dxc - dmu / self.batch_size

        self.dgamma = dgamma
        self.dbeta = dbeta
        return dx


if __name__ == '__main__':
    main()
```
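The input size of the first fully connected layer (`pool_output_size` in `SimpleConvNet.__init__`) follows from the usual output-size formula for convolution and pooling. As a rough sketch, the arithmetic for the settings used here (28x28 input, 5x5 filter, pad 0, stride 1, then 2x2 pooling with stride 2) works out as follows. The helper name `output_size` is my own and does not appear in the book's code.

```python
def output_size(input_size, window_size, pad=0, stride=1):
    """Output height/width of a convolution or pooling window (hypothetical helper)."""
    return (input_size + 2 * pad - window_size) // stride + 1


filter_num = 30

# Convolution: 28x28 input, 5x5 filter, pad 0, stride 1
conv_out = output_size(28, 5, pad=0, stride=1)

# Pooling: 2x2 window, stride 2, applied to the convolution output
pool_out = output_size(conv_out, 2, pad=0, stride=2)

# Number of values per sample fed into Affine1 (rows of W2)
flat_size = filter_num * pool_out * pool_out

print(conv_out, pool_out, flat_size)
```

With these settings, the feature map shrinks from 28 to 24 after the convolution and to 12 after pooling, so `W2` has 30 * 12 * 12 = 4320 rows.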

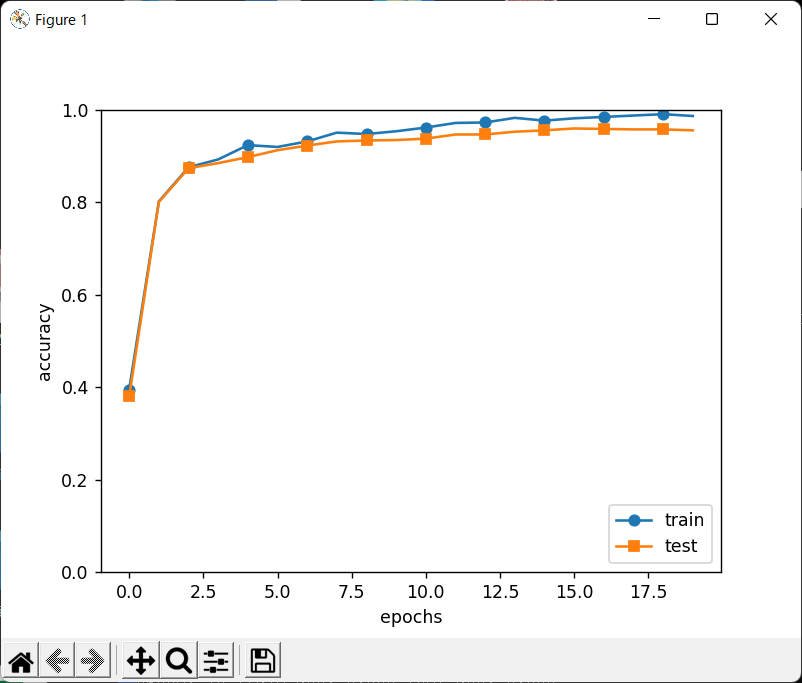

Running the code above produces the output below.

```
(dl_scratch) C:\Users\end0t\tmp\deep-learning-from-scratch\ch07>python foo.py
epoch: 1 train acc: 0.395 test acc: 0.38
epoch: 2 train acc: 0.802 test acc: 0.801
epoch: 3 train acc: 0.876 test acc: 0.874
epoch: 4 train acc: 0.893 test acc: 0.885
epoch: 5 train acc: 0.924 test acc: 0.898
epoch: 6 train acc: 0.92 test acc: 0.913
epoch: 7 train acc: 0.932 test acc: 0.923
epoch: 8 train acc: 0.951 test acc: 0.932
epoch: 9 train acc: 0.948 test acc: 0.934
epoch: 10 train acc: 0.954 test acc: 0.935
epoch: 11 train acc: 0.962 test acc: 0.938
epoch: 12 train acc: 0.972 test acc: 0.947
epoch: 13 train acc: 0.973 test acc: 0.947
epoch: 14 train acc: 0.983 test acc: 0.953
epoch: 15 train acc: 0.977 test acc: 0.956
epoch: 16 train acc: 0.982 test acc: 0.96
epoch: 17 train acc: 0.985 test acc: 0.959
epoch: 18 train acc: 0.988 test acc: 0.958
epoch: 19 train acc: 0.991 test acc: 0.958
epoch: 20 train acc: 0.987 test acc: 0.956
=============== Final Test Accuracy
test acc:0.956
Saved Network Parameters!
```